Key Takeaways

- Generative Engine Optimization (GEO) is the new standard for winning citations in AI-driven search results.

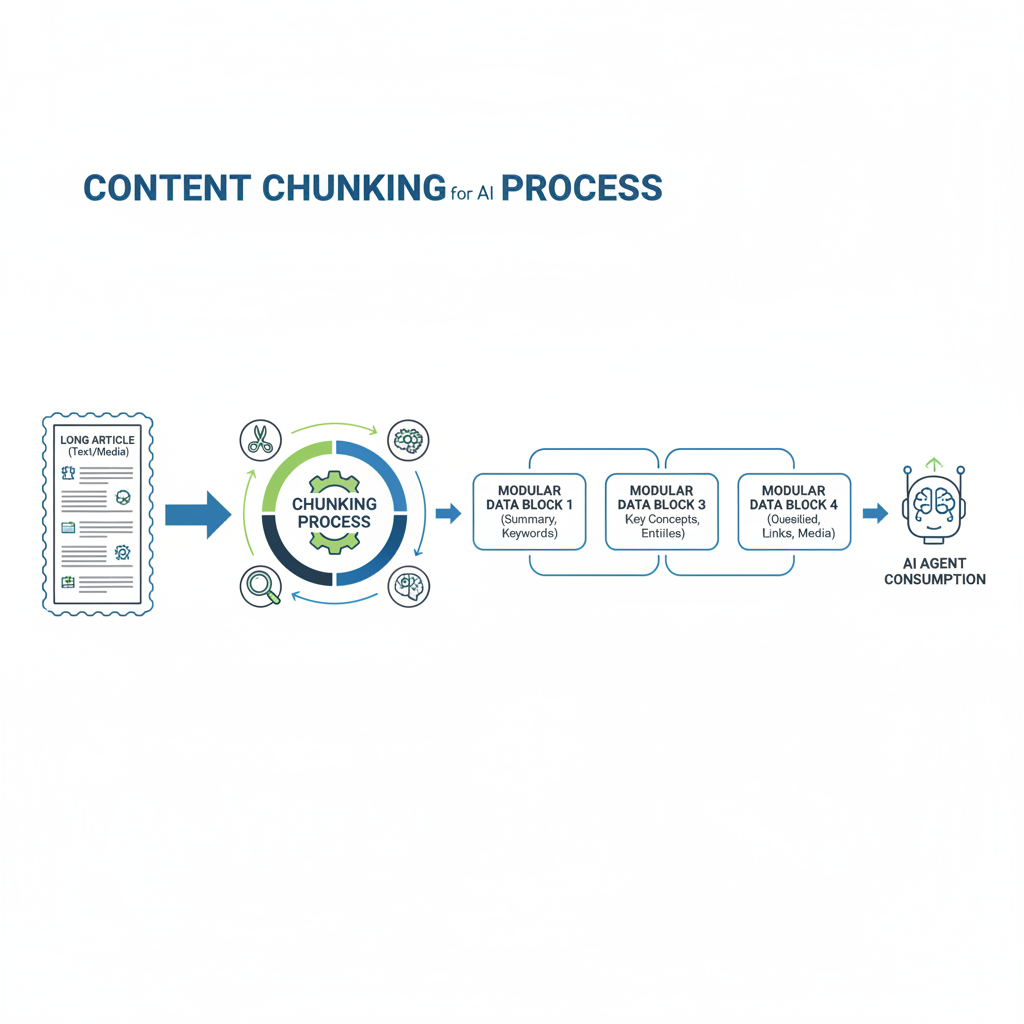

- Content chunking and modular data structures are essential for ingestion by AI agents.

- Automation via n8n enables small to medium-sized businesses to maintain multi-platform visibility without manual chaos.

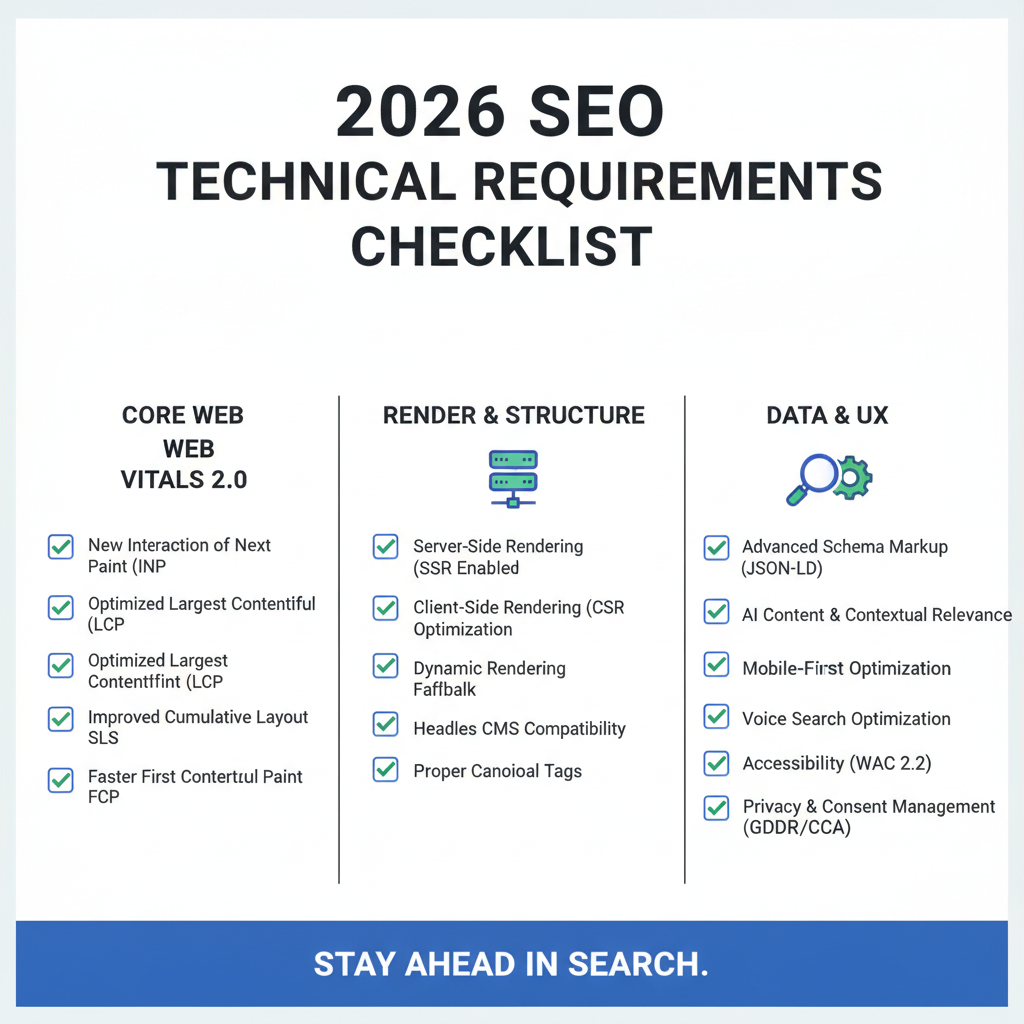

- Server-Side Rendering (SSR) and Schema.org connections provide the technical foundation for 2026 search compliance.

The Shift from SERPs to GEO: How AI Search Actually Works in 2026

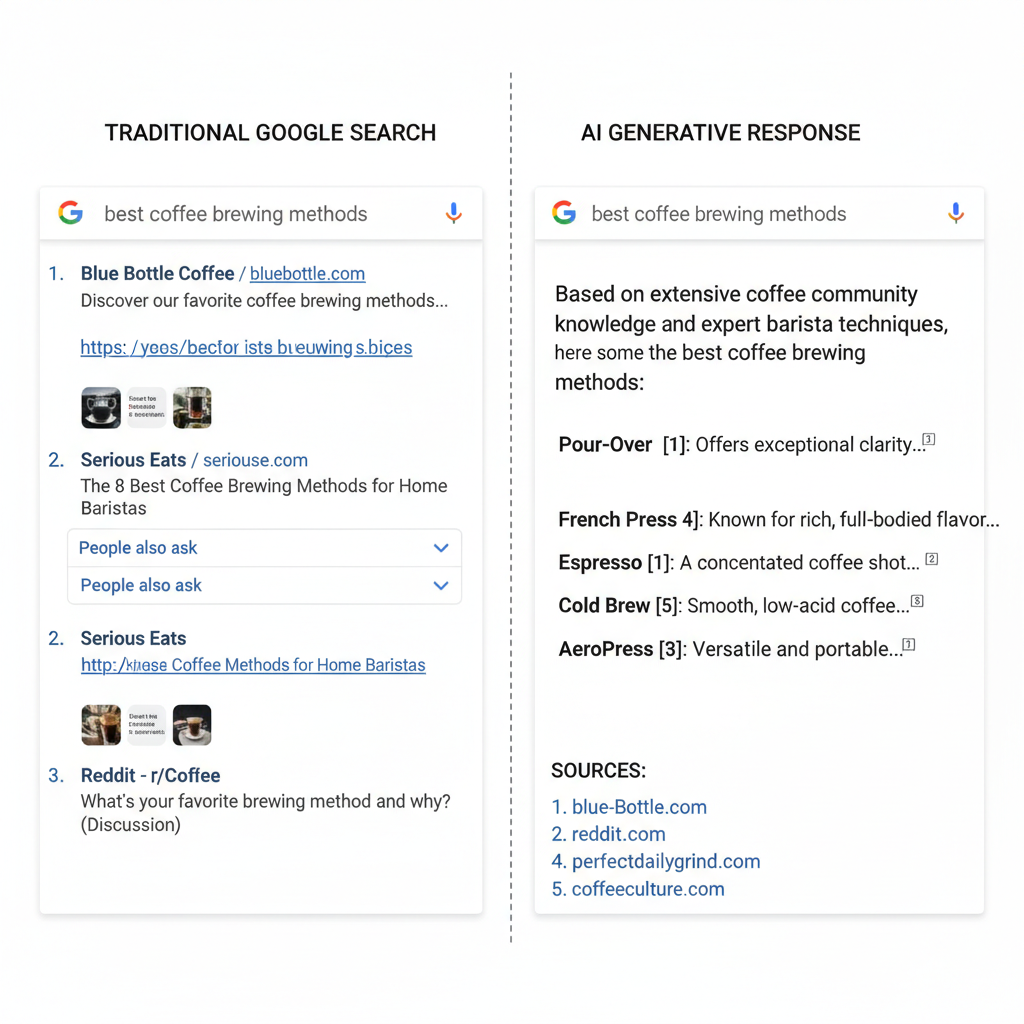

Generative Engine Optimization (GEO) and Answer Engine Optimization (AEO) have redefined how brands achieve visibility on the modern web. In 2026, the goal of search has shifted from ranking in a list of blue links to becoming the primary source cited in a generative summary. This transition is backed by a 26% increase in LLM-based search usage, where users prioritize immediate, synthesized answers over traditional browsing.

The strategic imperative for SMEs is clear: failing to be cited in an AI summary means being invisible to 40% of the active user base. AI agents do not “surf” the web like humans; they ingest structured data and verifiable entities. To stay relevant, businesses must move from manual tinkering to a systemic approach that provides the high-quality data inputs these agents require.

Technical SEO for AI Agents: Chunking and Semantic Clarity

Content chunking is the process of breaking long-form articles into modular, semantic fragments that AI bots can easily parse. In the current search landscape, treating a blog post as a single wall of text is a technical liability. Instead, content must be structured using explicit Schema.org entity connections and delivered via Server-Side Rendering (SSR) to ensure it is immediately available for ingestion by search bots.
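As a minimal sketch of what chunking can look like in practice, the snippet below splits a Markdown article into one chunk per heading. It assumes the source article is Markdown with `#`-style headings; the `Chunk` class, the `chunk_by_heading` function, and the "Introduction" label for text before the first heading are illustrative names, not part of any particular CMS or library.

```python
import re
from dataclasses import dataclass

# Matches Markdown headings such as "# Title" or "### Subsection".
HEADING = re.compile(r"^#{1,6}\s+(.*)$")


@dataclass
class Chunk:
    heading: str  # the heading the fragment belongs to
    text: str     # the body text under that heading


def chunk_by_heading(markdown: str) -> list[Chunk]:
    """Split a Markdown article into one chunk per heading."""
    # Text before the first heading is collected under an assumed label.
    sections: list[tuple[str, list[str]]] = [("Introduction", [])]
    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            sections.append((match.group(1).strip(), []))
        else:
            sections[-1][1].append(line)
    chunks = []
    for heading, body_lines in sections:
        body = "\n".join(body_lines).strip()
        if body:  # skip headings that have no text under them
            chunks.append(Chunk(heading=heading, text=body))
    return chunks
```

This sketch does not handle `#` lines inside fenced code blocks, and a production pipeline would likely also cap chunk length. The point is only that each fragment carries its own heading, so it still makes sense when read in isolation.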

| Feature | Human-Readable Content | Agent-Ingestible Data |
|---|---|---|
| Structure | Narrative flow and storytelling | Modular “chunks” and entities |
| Hierarchy | Visual H1-H4 headings | Nested JSON-LD and Schema |
| Loading | Client-side aesthetics | Server-Side Rendering (SSR) |
| Primary Goal | Engagement and time-on-page | Citation frequency and accuracy |

Semantic clarity ensures that the relationship between different topics on your site is unmistakable. While humans enjoy a rhythmic narrative, AI agents prioritize the extraction of specific facts. By implementing modern frameworks like Next.js or ensuring your low-code CMS outputs clean data, you provide the clarity needed for agentic search engines to trust and cite your expertise.
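To make the idea of explicit Schema.org connections concrete, here is a small sketch that builds `FAQPage` JSON-LD from question and answer pairs and wraps it in a script tag that a server-rendered page could emit. The `FAQPage`, `Question`, and `Answer` types and the `mainEntity`, `name`, `acceptedAnswer`, and `text` properties come from Schema.org; the function names `faq_jsonld` and `as_script_tag` are hypothetical helpers for illustration.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> dict:
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data: dict) -> str:
    """Serialize JSON-LD into a script tag for server-rendered HTML."""
    # Escape "<" so answer text can never close the script element early.
    payload = json.dumps(data, ensure_ascii=False).replace("<", "\\u003c")
    return f'<script type="application/ld+json">{payload}</script>'
```

Because the tag is generated from the same data that produces the visible FAQ, the markup and the page text are less likely to drift apart.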

Automating Search Everywhere: From Manual Chaos to Systemic Visibility

Low-code automation using tools like n8n has become the only sustainable way for smaller organizations to compete with enterprise resources. By creating workflows that automatically sync insights from a core article to LinkedIn, TikTok, and other social channels, businesses can maintain a multi-platform presence without constant manual labor. This approach transforms a single piece of content into a distributed visibility network.

Modern automation systems now include SERP monitoring triggers that detect changes in citation patterns. When an AI summary drops a brand’s reference, the system can alert the content team or trigger an automated update to the page’s metadata and Schema. This move from manual chaos to a working system allows teams to spend less time on administration and more on strategic decision-making.
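The monitoring trigger described above can be sketched as a comparison between two snapshots of cited sources. How those snapshots are collected is outside the scope of this example; the data shape (a mapping from query to the set of cited domains), the function name `dropped_citations`, and the domains used are all assumptions for illustration.

```python
def dropped_citations(
    previous: dict[str, set[str]],
    current: dict[str, set[str]],
    brand_domain: str,
) -> list[str]:
    """Return the queries where brand_domain was cited before but is not now."""
    return sorted(
        query
        for query, sources in previous.items()
        if brand_domain in sources
        and brand_domain not in current.get(query, set())
    )


# Hypothetical snapshots: query -> domains cited in the AI summary.
last_week = {
    "what is geo": {"example.com", "other.org"},
    "content chunking": {"example.com"},
}
this_week = {
    "what is geo": {"other.org"},
    "content chunking": {"example.com"},
}

lost = dropped_citations(last_week, this_week, "example.com")
print(lost)  # ['what is geo']
```

In an automation workflow, a non-empty result could be passed to whatever alerting or update step the team already uses.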

Why Technical Foundations Matter More Than Ever

Information architecture remains the silent driver of success in an AI-dominated search market. Even the most advanced AI models struggle with poorly rendered sites or broken internal link structures. A robust technical foundation ensures that crawl budgets are spent on high-value pages, and that the data presented is authoritative and easy to index.
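One way to check internal link structure is to audit a map of pages and the internal links each one contains. The sketch below assumes that map already exists (for example, exported from a crawler); the `audit_internal_links` name, the input shape, and the sample URLs are hypothetical.

```python
def audit_internal_links(
    site: dict[str, list[str]], home: str = "/"
) -> dict[str, list]:
    """Find links to missing pages and pages that nothing links to."""
    pages = set(site)
    # A link is broken when its target is not a known page.
    broken = sorted(
        (source, target)
        for source, links in site.items()
        for target in links
        if target not in pages
    )
    # A page is an orphan when no page links to it (the home page is exempt).
    linked = {target for links in site.values() for target in links}
    orphans = sorted(p for p in pages if p not in linked and p != home)
    return {"broken": broken, "orphans": orphans}


# Hypothetical site map: page -> internal links found on that page.
site = {
    "/": ["/blog/geo", "/pricing"],
    "/blog/geo": ["/", "/blog/chunking"],
    "/pricing": ["/"],
    "/about": [],
}
report = audit_internal_links(site)
print(report["broken"])   # [('/blog/geo', '/blog/chunking')]
print(report["orphans"])  # ['/about']
```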

Technical hygiene is a continuous requirement, not a one-time task. Sites that prioritize high-quality rendering and structured data are cited more frequently because they reduce the computational effort required for search engines to understand their content. This efficiency translates directly into better visibility and more frequent citations in AI responses.

From Manual Chaos to Working Systems

Auditing current workflows is the essential starting point for reclaiming operational efficiency. Many marketing departments are still trapped in the manual labor of generating meta-descriptions, updating internal links, and cross-posting content. These repetitive tasks are prime candidates for low-code automation, allowing your staff to focus on high-level content strategy rather than routine maintenance.
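As an example of how small such an automation can be, the sketch below drafts a meta description from a page's opening text. The 155-character limit is a common rule of thumb rather than a fixed standard, and the `meta_description` function is a hypothetical helper; a human editor would still review the drafts.

```python
import re


def meta_description(text: str, limit: int = 155) -> str:
    """Draft a meta description by trimming text at a word boundary."""
    collapsed = re.sub(r"\s+", " ", text).strip()
    if len(collapsed) <= limit:
        return collapsed
    # Reserve one character for the ellipsis, then cut at the last full word.
    cut = collapsed[: limit - 1].rsplit(" ", 1)[0]
    return cut.rstrip(",;:") + "…"
```

Called with `meta_description(intro_paragraph)`, it returns short text unchanged and shortens longer text so that the result never exceeds `limit` characters.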

By identifying these friction points, you can implement n8n-based systems that take over the heavy lifting of data handling. The transition from a chaotic manual process to a structured visibility system is the hallmark of a resilient business in 2026. This systematic approach ensures that every piece of content created is optimized for both the human reader and the AI agents that now gatekeep the search results.

Frequently Asked Questions

What is the difference between SEO and GEO?

Traditional SEO aims to rank a website in a list of results, while Generative Engine Optimization (GEO) focuses on being included as a cited source within an AI-generated answer.

Is content chunking necessary for smaller websites?

Yes. AI agents process information more effectively when it is modular. Regardless of site size, clear structure helps agents parse and cite your specific information accurately.

How does automation help with search visibility?

Automation allows for real-time updates to technical data, such as Schema and metadata, and ensures that content is consistently distributed across multiple platforms without manual effort.

Do we still need human-centric content in 2026?

Human-centric value remains critical. AI agents look for unique insights and expertise to include in their summaries, which can only be provided by skilled human creators.

Your next move

The landscape of search has changed, but the opportunity for growth remains significant for those who adapt. Moving from manual tinkering to a structured, automated system is the most effective way to ensure your brand survives and thrives in the era of GEO. Review your existing content pipeline and identify one repetitive task (such as internal linking or cross-platform syncing) to automate this week.

By establishing a technical foundation based on semantic clarity and modular data, you position your brand to be cited by the very AI agents that users now rely on. Start building your automated visibility system today to move from manual chaos to a reliable, working engine.