Key Takeaways

- Automated SEO testing reduces human error and provides real-time performance insights.

- Strategic validation focuses on technical integrity, semantic relevance, and SERP volatility.

- n8n serves as a powerful orchestrator for connecting SEO tools into a unified testing suite.

- Meaningful SEO A/B testing requires a structured hypothesis and isolated variables.

The Strategic Imperative of Automated SEO Testing

Moving from reactive audits to proactive validation is a fundamental shift for any site operating at scale. Manual SEO checks are prone to human error and often miss the frequent changes that occur across large web properties. An automated testing environment moves a business from a defensive posture to a strategic one, maintaining search visibility through systematic, continuous checks rather than periodic intervention.

| Feature | Manual SEO Testing | Automated SEO Testing |
|---|---|---|
| Scalability | Limited by available staff hours | Scales to thousands of URLs |
| Frequency | Monthly or quarterly audits | Continuous or scheduled (hourly/daily) monitoring |
| Accuracy | High risk of oversight | Consistent, rule-based checks |
| Response Time | Delayed (post-incident) | Immediate alerts upon deviation |

Foundational Pillars: What to Test in an Automated Workflow

Defining the specific variables for monitoring is the first step in creating a reliable validation system. Automation should not merely collect data; it must validate specific hypotheses regarding technical health and content performance. A sophisticated workflow addresses three core pillars to maintain competitive parity in shifting search landscapes.

Technical Integrity and Crawlability

Maintaining technical health at scale requires a system that continuously monitors status codes, schema markup validity, and Core Web Vitals. Even minor changes to a site's architecture can inadvertently block search crawlers or degrade page speed metrics, leading to rapid ranking declines. Automated scripts can verify that every high-priority page remains indexable and that its structured data still parses and conforms to the Schema.org vocabulary.
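
Below is a minimal sketch of a per-URL indexability check. It assumes the page has already been fetched, for example by an n8n HTTP Request node or any crawler, and that the status code, response headers, and HTML are passed in. The function and class names are hypothetical.

The check looks at three things:
- the HTTP status code;
- `noindex` in either the `X-Robots-Tag` header or the meta robots tag;
- whether each JSON-LD block parses.

It validates syntax only. It does not validate against full Schema.org type definitions or rich result requirements.

```python
import json
from html.parser import HTMLParser


class SeoTagParser(HTMLParser):
    """Collects the meta robots directive and raw JSON-LD blocks from a page."""

    def __init__(self):
        super().__init__()
        self.robots = ""
        self.json_ld_blocks = []
        self._in_json_ld = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)  # attribute values may be None, hence the `or ""`
        if tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.robots = (attrs.get("content") or "").lower()
        elif tag == "script" and (attrs.get("type") or "").lower() == "application/ld+json":
            self._in_json_ld = True
            self.json_ld_blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_json_ld = False

    def handle_data(self, data):
        if self._in_json_ld:
            self.json_ld_blocks[-1] += data


def check_indexability(status, headers, html):
    """Return human-readable indexability issues for one fetched URL."""
    issues = []
    if status != 200:
        issues.append(f"non-200 status: {status}")
    # HTTP header names are case-insensitive, so normalise before the lookup.
    lowered = {k.lower(): v.lower() for k, v in headers.items()}
    if "noindex" in lowered.get("x-robots-tag", ""):
        issues.append("noindex in X-Robots-Tag header")
    parser = SeoTagParser()
    parser.feed(html)
    parser.close()
    if "noindex" in parser.robots:
        issues.append("noindex in meta robots")
    for i, block in enumerate(parser.json_ld_blocks):
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            issues.append(f"JSON-LD block {i} is not valid JSON")
            continue
        # Syntax-level check only: top-level objects should declare @type or @graph.
        if isinstance(data, dict) and "@type" not in data and "@graph" not in data:
            issues.append(f"JSON-LD block {i} has no @type")
    return issues
```

An empty list means the page passed these checks. In a workflow, any non-empty result would feed the alerting step.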

On-Page Semantic Relevance

AI-driven analysis helps keep content aligned with evolving search intent and keyword clusters. Search engines continually refine their understanding of semantic relationships, so a page that was once well optimized can lose relevance over time. Automated workflows can compare existing content against top-ranking competitors and flag gaps in topic coverage or keyword usage that warrant editorial review.
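
The paragraph above describes AI-driven analysis. The sketch below swaps in a much simpler lexical technique to show the shape of a coverage-gap check: it counts how many competitor pages use each term. It assumes page text has already been extracted. The stopword list, the `min_share` threshold, and the function names are illustrative assumptions.

```python
import re
from collections import Counter

# Illustrative stopword list; a real workflow would use a fuller one.
STOPWORDS = {"the", "and", "for", "with", "that", "this", "are", "from", "your"}


def terms(text):
    """Lowercase word tokens of three or more characters, minus stopwords."""
    return [w for w in re.findall(r"[a-z0-9]+", text.lower())
            if len(w) > 2 and w not in STOPWORDS]


def coverage_gaps(our_text, competitor_texts, min_share=0.5):
    """Terms used by at least `min_share` of competitor pages but absent from ours."""
    ours = set(terms(our_text))
    doc_freq = Counter()
    for text in competitor_texts:
        doc_freq.update(set(terms(text)))  # count each term once per page
    threshold = min_share * len(competitor_texts)
    return sorted(t for t, n in doc_freq.items() if n >= threshold and t not in ours)
```

The terms returned are candidates for editorial review, not automatic edits. An embedding-based or AI-driven comparison would replace `terms` and the frequency threshold while keeping the same overall flow.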

Performance Benchmarking

Tracking rank volatility and click-through rate against specific site changes provides the data needed for strategic decisions. By feeding performance metrics directly into a testing database, businesses can correlate updates, such as metadata changes or internal linking adjustments, with actual movement in the search results. This continuous feedback loop turns raw search data into actionable business intelligence.
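
One simple way to correlate a change with search movement is to compare equal-length windows before and after the change date. The sketch below assumes daily rows shaped loosely after Search Console's clicks, impressions, and average position metrics. The field names, function names, and 14-day window are hypothetical. Average position is weighted by impressions so that low-volume days do not dominate.

```python
from datetime import date, timedelta


def summarise(rows):
    """Aggregate daily rows into CTR and impression-weighted average position."""
    clicks = sum(r["clicks"] for r in rows)
    impressions = sum(r["impressions"] for r in rows)
    if impressions == 0:
        return {"ctr": 0.0, "position": None}
    weighted_pos = sum(r["position"] * r["impressions"] for r in rows)
    return {"ctr": clicks / impressions, "position": weighted_pos / impressions}


def before_after(daily, change_date, window_days=14):
    """Compare equal-length windows immediately before and after a site change."""
    start = change_date - timedelta(days=window_days)
    end = change_date + timedelta(days=window_days)
    before = [r for r in daily if start <= r["date"] < change_date]
    after = [r for r in daily if change_date <= r["date"] < end]
    return summarise(before), summarise(after)
```

A plain before/after comparison cannot separate the effect of your change from seasonality or algorithm updates. The controlled A/B framework described later addresses that gap.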

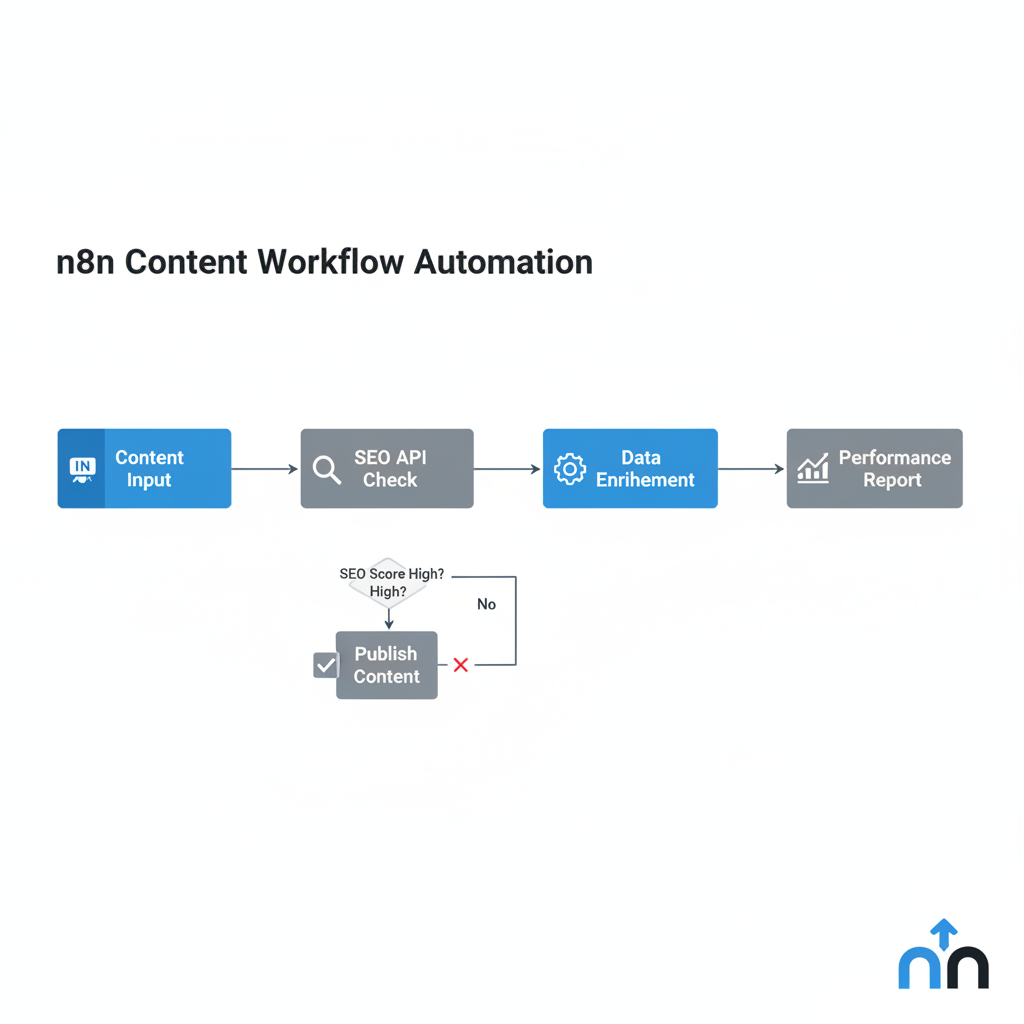

Architecting the Test Environment with n8n

Using n8n as a central orchestrator lets teams integrate SEO APIs such as Ahrefs, Semrush, and Google Search Console into a single workflow. Its low-code, node-based approach supports complex logic paths without extensive traditional programming. For instance, a workflow can trigger a full technical audit whenever a primary keyword drops by more than three positions in a single day.
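
The trigger condition from the example above is simple enough to sketch directly. In n8n it could live in an IF or Code node. The sketch shows only the decision logic in plain Python and assumes rank data arrives as a hypothetical `{keyword: position}` mapping for each day. Positions are 1-based, so a larger number is a worse ranking.

```python
def keywords_needing_audit(yesterday, today, threshold=3):
    """Return (keyword, previous, current) for keywords that dropped > threshold places.

    A keyword missing from today's data is also flagged, since it may have
    fallen out of the tracked result range entirely.
    """
    flagged = []
    for keyword, previous in yesterday.items():
        current = today.get(keyword)
        if current is None or current - previous > threshold:
            flagged.append((keyword, previous, current))
    return flagged
```

A non-empty result would route the workflow down the branch that launches the full technical audit.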

Beyond data collection, n8n excels at routing intelligence to the right stakeholders. When the system detects an anomaly, such as a sudden surge in 404 errors or the loss of rich result features, it can automatically create a ticket in a project management tool or send a prioritized alert to a Slack channel. This keeps technical debt from accumulating and helps resolve critical issues before they affect organic traffic and revenue.
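
n8n ships its own Slack node, so this sketch only illustrates the underlying logic. It flags a 404 surge against a recent baseline and posts the alert to a Slack incoming webhook, which accepts a JSON body with a `text` field. The thresholds (`factor`, `min_count`) and function names are hypothetical. Ticket creation is not shown.

```python
import json
import urllib.request


def build_404_alert(site, history, today, factor=2.0, min_count=20):
    """Return a Slack message payload if today's 404 count looks anomalous, else None.

    history: daily 404 counts for recent days; today: today's count.
    """
    baseline = sum(history) / len(history) if history else 0.0
    # Require both an absolute floor and a relative jump to avoid noisy alerts.
    if today < min_count or today <= factor * baseline:
        return None
    return {
        "text": f"{site}: {today} URLs returned 404 today "
                f"vs. a {len(history)}-day average of {baseline:.1f}"
    }


def post_to_slack(webhook_url, payload):
    """POST a JSON payload to a Slack incoming webhook URL and return the HTTP status."""
    request = urllib.request.Request(
        webhook_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.status
```

Keeping detection separate from delivery makes the thresholds easy to test without sending real messages.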

From Hypothesis to Data: The SEO A/B Testing Framework

Controlled experiments are the most reliable way to validate which changes actually drive performance gains. Conventional A/B testing splits users. SEO experiments must split pages instead, because search engines crawl and index only one version of each URL. This requires selecting “twin” pages: groups of pages with similar historical traffic and search intent. One group serves as the control while the other receives the modification, such as a new header structure or updated meta descriptions.
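
One common way to build twin groups is matched-pair randomization, and the sketch below uses it. Pages are sorted by historical traffic and paired off, and one page from each pair is randomly assigned to each group. The sketch balances on traffic only. It assumes the input has already been filtered to pages sharing one template and search intent. The `(url, sessions)` tuple format and function name are hypothetical.

```python
import random


def split_twin_groups(pages, seed=42):
    """Split (url, historical_sessions) pages into traffic-matched control/variant groups.

    If the page count is odd, the lowest-traffic page is left out.
    """
    rng = random.Random(seed)  # seeded so the assignment is reproducible
    ranked = sorted(pages, key=lambda p: p[1], reverse=True)
    control, variant = [], []
    for i in range(0, len(ranked) - 1, 2):
        pair = [ranked[i], ranked[i + 1]]
        rng.shuffle(pair)  # randomise which twin receives the change
        control.append(pair[0])
        variant.append(pair[1])
    return control, variant
```

Because each pair has neighbouring traffic levels, the two groups end up with similar totals. The random assignment within each pair guards against systematically giving the change to the stronger page.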

The framework works only if variables are isolated, so that changes in performance can be attributed to the specific update. Automation manages this process by monitoring both groups simultaneously and calculating statistical significance. This evidence-based approach removes much of the guesswork from SEO strategy, allowing marketing directors to roll out successful changes across the entire site with confidence.
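
The article does not name a significance method, so the sketch below uses one straightforward option: a two-sided permutation test on per-page outcomes. Each input value is assumed to be one page's relative change in clicks between the pre-test and test periods. Testing at the page level matters because treating every impression as an independent trial tends to overstate significance. The function name and defaults are hypothetical.

```python
import random


def permutation_p_value(control, variant, n_permutations=10_000, seed=0):
    """Two-sided permutation test on the difference in mean per-page change.

    Returns (observed difference, estimated p-value).
    """
    rng = random.Random(seed)  # seeded so the estimate is reproducible

    def mean(xs):
        return sum(xs) / len(xs)

    observed = mean(variant) - mean(control)
    pooled = list(control) + list(variant)
    n_variant = len(variant)
    extreme = 0
    for _ in range(n_permutations):
        # Under the null hypothesis the group labels are exchangeable.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_variant]) - mean(pooled[n_variant:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Counting the observed split itself keeps the estimate above zero.
    return observed, (extreme + 1) / (n_permutations + 1)
```

A small p-value suggests the difference between groups is unlikely to be chance alone. It does not rule out confounders that affected only one group, which is why variable isolation still matters.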

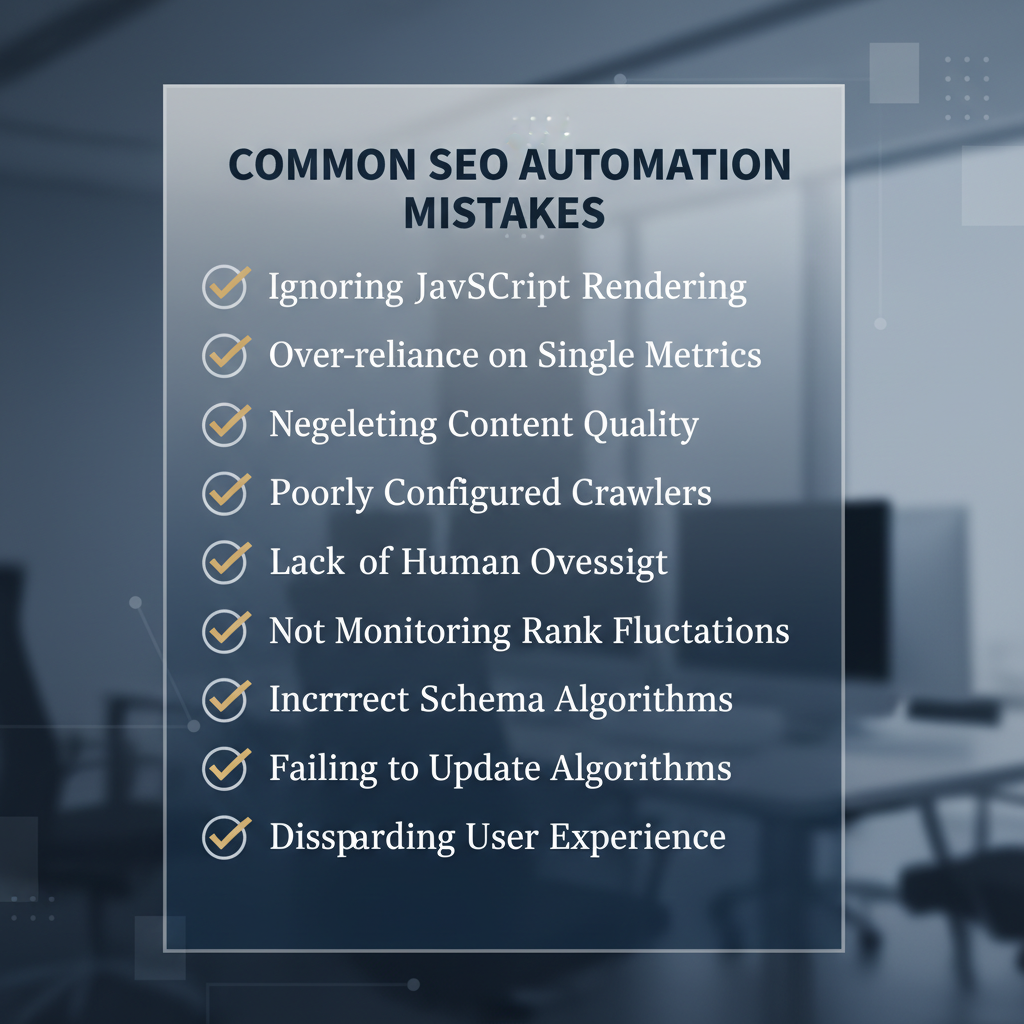

Common Pitfalls in Programmatic SEO Validation

Avoiding over-automation without human oversight is critical for maintaining the integrity of an SEO system. While machines excel at data processing and anomaly detection, they lack the nuanced understanding of brand voice and long-term strategic goals. Relying entirely on automated reports without professional interpretation can lead to a focus on “vanity metrics” rather than high-value conversions.

FAQ

How often should automated SEO tests run?

Critical technical health checks, such as status code monitoring and crawlability audits, should run daily or even hourly for high-traffic sites. Ranking and semantic relevance checks are typically more effective when run on a weekly basis to account for standard SERP volatility.

Do I need extensive coding knowledge to use n8n for SEO?

No, n8n is a low-code platform that utilizes a visual node-based interface. While a basic understanding of JSON and API structures is helpful, the platform is designed to allow business professionals to build complex automations without writing traditional code.

Can automation replace a professional SEO audit?

Automation enhances the auditing process by handling the repetitive data collection and monitoring tasks. However, it does not replace the need for a professional strategist to interpret the data, identify complex patterns, and set the overarching creative direction.

What are the most critical KPIs to monitor in an automated system?

The most vital indicators include crawl error rates, Core Web Vitals performance, keyword ranking distribution, and organic click-through rate. Monitoring these ensures both the technical accessibility and the competitive relevance of your content.
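
The KPIs listed above reduce to a few simple calculations, sketched below:
- crawl error rate is errors divided by URLs crawled;
- CTR is clicks divided by impressions;
- ranking distribution buckets tracked positions into bands.

The band boundaries and function names are illustrative assumptions. The Core Web Vitals limits are Google's published "good" thresholds (LCP 2.5 s, INP 200 ms, CLS 0.1), which Google assesses at the 75th percentile of page loads. The input metrics are assumed to already be p75 values.

```python
from collections import Counter

# Google's "good" thresholds; inputs are assumed to be 75th-percentile values.
CWV_GOOD = {"lcp_ms": 2500, "inp_ms": 200, "cls": 0.1}
BANDS = ("1-3", "4-10", "11-20", "21+")


def band(position):
    """Map a 1-based ranking position to an illustrative SERP band."""
    if position <= 3:
        return "1-3"
    if position <= 10:
        return "4-10"
    if position <= 20:
        return "11-20"
    return "21+"


def ranking_distribution(positions):
    counts = Counter(band(p) for p in positions)
    return {b: counts.get(b, 0) for b in BANDS}


def cwv_failures(metrics):
    """Names of Core Web Vitals that exceed the "good" threshold (missing counts as failing)."""
    return sorted(k for k, limit in CWV_GOOD.items() if metrics.get(k, float("inf")) > limit)


def kpi_snapshot(crawled, crawl_errors, clicks, impressions, positions, cwv):
    return {
        "crawl_error_rate": crawl_errors / crawled if crawled else 0.0,
        "ctr": clicks / impressions if impressions else 0.0,
        "ranking_distribution": ranking_distribution(positions),
        "cwv_failures": cwv_failures(cwv),
    }
```

Storing one snapshot per run makes it straightforward to chart each KPI over time and alert on regressions.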

The Path Toward Algorithmic Efficiency

Auditing your current manual processes is the necessary first step toward a high-performance automation suite. Identify the SEO tasks that consume the most internal resources or are most susceptible to human error, and target these as the first candidates for automation. By systematically replacing ad hoc manual work with structured workflows, you build a scalable foundation that can adapt quickly as search algorithms change.