Key Takeaways: Why AI Lead Scoring is a Strategic Imperative

AI-powered lead scoring replaces manual intuition with data-driven weights, reducing time wasted on unqualified prospects by up to 30% and increasing sales team morale. This shift from subjective guessing to algorithmic precision fundamentally transforms how your organization identifies high-value opportunities. Have you considered the hidden cost of your sales team manually sifting through lukewarm inquiries? For a typical 10-person B2B sales team, implementing automated scoring can reduce weekly “lead cleanup” meetings from four hours to just thirty minutes, reclaiming valuable time for actual closing activities.

Beyond simple time savings, this technology establishes a rigorous framework for growth that scales without requiring proportional increases in headcount. By integrating behavioral data and firmographic markers, businesses move from a reactive posture to a proactive strategy. This evolution ensures that your most talented closers focus exclusively on accounts with the highest propensity to convert, effectively maximizing the return on every marketing dollar spent. A systematic approach eliminates the friction often found at the hand-off point between departments.

This transition toward automated systems provides several distinct advantages for small and medium-sized enterprises seeking to optimize their resources:

- Mitigation of Qualification Fatigue: Automation removes the cognitive burden of evaluating hundreds of low-intent signals, preventing burnout and ensuring high-value leads receive immediate attention.

- Standardization of Sales Qualified Leads (SQLs): By establishing a data-backed threshold for readiness, both marketing and sales operate under a single, objective definition of what constitutes a “hot” lead.

- Real-Time Prioritization: AI models update scores instantly based on prospect behavior, allowing your team to strike while interest is at its peak rather than waiting for manual batch processing.

- Enhanced Predictive Accuracy: Machine learning identifies subtle patterns in conversion data that human observers often overlook, leading to more reliable revenue forecasting.

- Operational Efficiency: Low-code integrations allow these systems to function autonomously, syncing data across CRM platforms without requiring constant manual oversight or technical intervention.

Adopting these automated systems is no longer a luxury but a fundamental requirement for any business aiming to maintain a competitive edge in an increasingly data-centric market.

The Hidden Cost of ‘Gut Feeling’ Lead Qualification

The cost of manual lead qualification includes wasted salary on ‘chasing ghosts,’ pipeline bloat that obscures real deals, and decreased sales team morale due to low win rates. Relying on intuition rather than data causes teams to prioritize volume over value. This creates a deceptive sense of activity that masks a stagnant conversion rate. Small teams simply cannot afford this inefficiency. Every hour spent on a dead-end lead is an hour stolen from a high-intent prospect.

Consider a sales manager spending 2 hours every morning manually cross-referencing new signups against LinkedIn profiles to guess their budget. This labor-intensive process (often prone to human error) represents a significant drain on high-level talent. Instead of refining closing strategies or nurturing relationships, skilled professionals are reduced to data entry clerks. The financial impact is clear: if a senior rep earns $100,000 annually, losing 25% of their time to manual research equates to $25,000 in lost productivity every year.

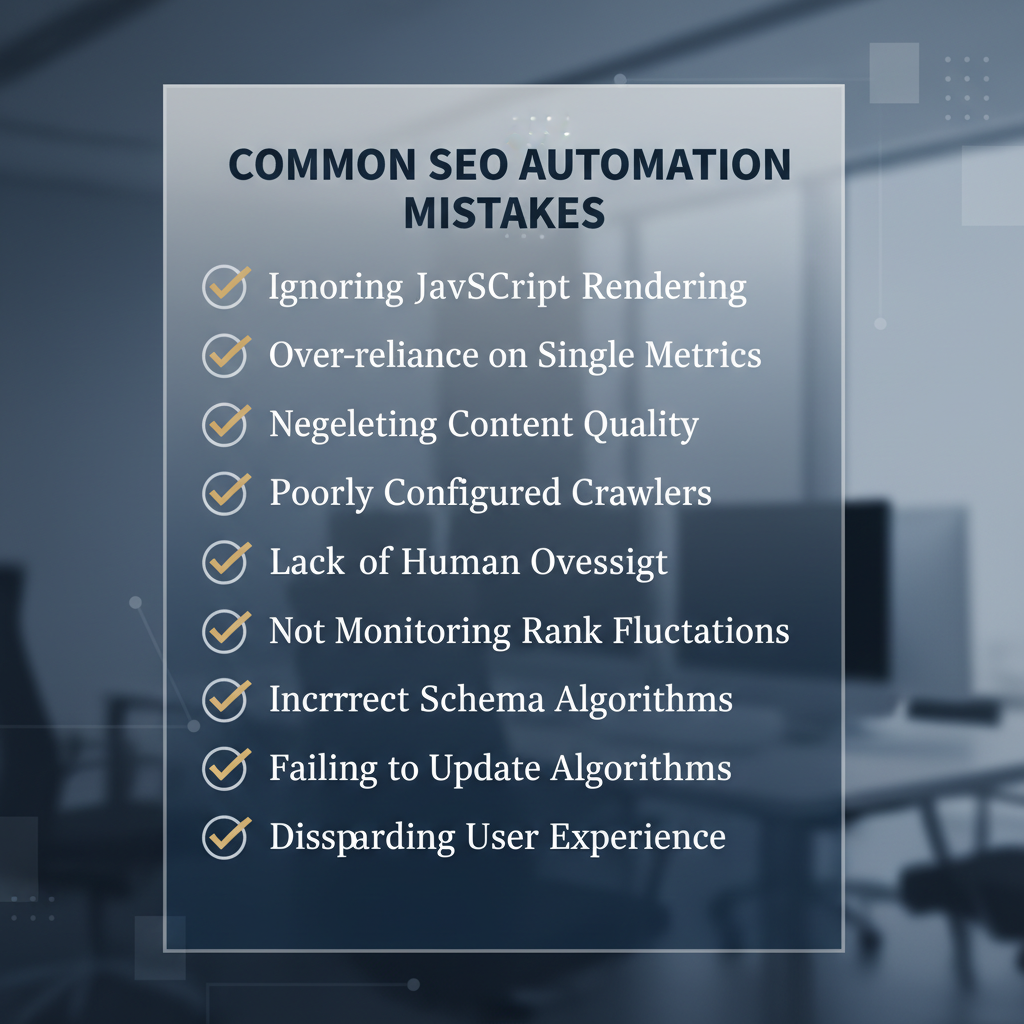

Pipeline bloat occurs when unqualified leads linger in the CRM because nobody has the data to disqualify them. This clutter makes it impossible for leadership to forecast revenue accurately, leading to missed targets and misallocated budgets. It also leads to burnout. Sales representatives thrive on winning. When their days are filled with rejection from prospects who were never a fit to begin with, motivation plummets. A demoralized team is a stagnant team, and stagnation is often the precursor to high turnover rates in competitive sales environments.

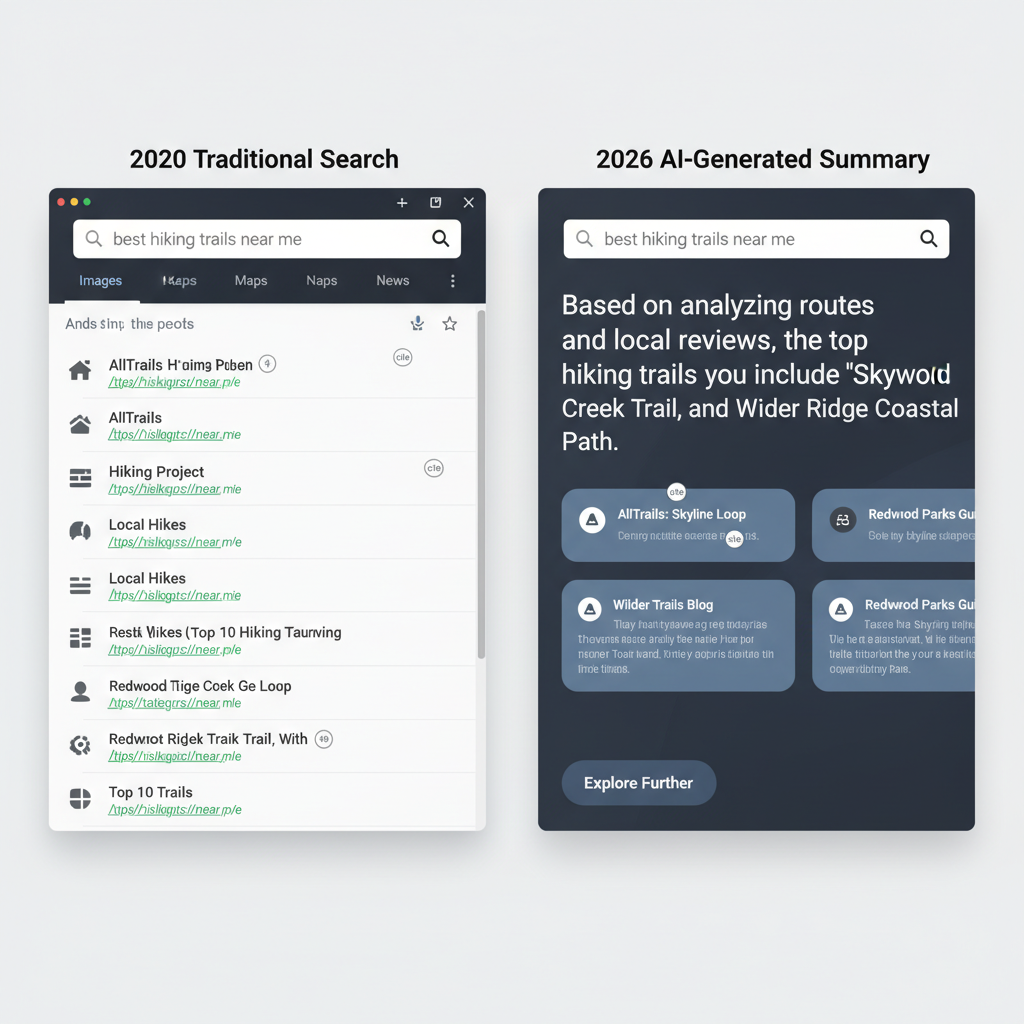

Opportunity costs extend beyond salary. There is the “cost of delay” for legitimate prospects who wait in line while staff “chase ghosts.” In competitive markets, a four-hour delay in response time can be the difference between a closed deal and a lost opportunity. Manual systems are inherently slow. They lack the agility to react to intent signals in real-time, such as a prospect visiting a pricing page multiple times or downloading a specific case study. Without automated triggers, these signals go unnoticed.

Relying on a “gut feeling” is no longer a viable business strategy. It is a liability, and it does not scale. By quantifying these losses, organizations can see that automation is not just a luxury; it is a survival mechanism for the modern sales floor. Transitioning to a data-driven model ensures that every minute of a salesperson’s day is directed toward revenue-generating activities rather than administrative guesswork.

Defining the Data Points that Actually Predict Conversion

Effective lead scoring data points include firmographics such as company size and industry, behavioral data like pricing page visits, and technographics which identify a prospect’s current software stack. This requires a structured taxonomy to differentiate between casual browsers and genuine buyers. Firmographic data serves as the foundational filter, ensuring that a lead fits the ideal customer profile. If a lead originates from an enterprise with 500 employees but your service is for solo creators, a score must reflect that misalignment immediately.

Behavioral data provides the necessary context regarding a prospect’s current intent level. For instance, identifying that leads who visit the “Pricing” page three times in 48 hours are five times more likely to convert than those who only read blog posts allows for immediate prioritization. Technographics further refine this by revealing if a lead already utilizes compatible tools like n8n. Contextual data adds a final layer by examining the environment of an interaction, such as the referral source.

Distinguishing between high-intent actions and passive engagement is critical for resource management. Consider a micro-scenario: two leads download the same whitepaper. Lead A stops there, while Lead B proceeds to click a link in the follow-up email and views a “Request a Demo” video. Lead B demonstrates a specific progression through the funnel that warrants a higher score. High-intent actions are those that signal a readiness to buy, such as interacting with a ROI calculator or viewing technical documentation, rather than just consuming top-of-funnel educational content.

Data validity is inherently tied to time, a concept known as engagement decay. A lead who was highly active six months ago but has not opened an email since is no longer a hot prospect. AI-driven scoring systems automatically depreciate scores over time. This prevents the inflation of scores based on historical actions that no longer reflect current business needs or priorities.
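To make engagement decay concrete, here is a minimal sketch that assumes an exponential half-life model. The 30-day half-life, the function names, and the sample events are illustrative assumptions, not values from any particular scoring tool.

```python
from datetime import datetime, timedelta

# Assumption: a behavioral signal loses half of its value every 30 days.
HALF_LIFE_DAYS = 30.0


def decayed_points(points, event_time, now, half_life_days=HALF_LIFE_DAYS):
    """Return how many points an event is still worth at time `now`."""
    age_days = max((now - event_time).total_seconds() / 86400.0, 0.0)
    return points * 0.5 ** (age_days / half_life_days)


def behavioral_score(events, now):
    """Sum the decayed value of every (points, timestamp) event."""
    return sum(decayed_points(points, ts, now) for points, ts in events)


now = datetime(2024, 6, 1)
recent_lead = [(20.0, now - timedelta(days=2))]    # active two days ago
stale_lead = [(20.0, now - timedelta(days=180))]   # silent for six months

print(round(behavioral_score(recent_lead, now), 1))
print(round(behavioral_score(stale_lead, now), 1))
```

With these assumed numbers, the same 20-point action is worth almost its full value after two days and well under one point after six months. A shorter half-life makes the model forget faster.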

It is a mistake to view these data points as a simple checklist where every action adds a flat value. True multi-dimensional scoring requires weighting these variables dynamically. A firmographic match is a prerequisite, but behavioral spikes are the triggers for outreach. By synthesizing firmographic, behavioral, and technographic inputs into a single, evolving metric, SMEs can replace manual chaos with a predictable pipeline. This strategy ensures that every notification sent to a sales representative represents a genuine opportunity rather than a statistical anomaly.
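One way to express "fit is a prerequisite, behavior is the trigger" is to gate the score on fit before blending the two dimensions. The sketch below is one possible formulation. The threshold and the 40/60 weights are assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    fit_score: float       # 0-100, firmographic and technographic match
    behavior_score: float  # 0-100, recent engagement


# Assumed values for illustration; tune them against your own conversions.
FIT_THRESHOLD = 50.0
FIT_WEIGHT = 0.4
BEHAVIOR_WEIGHT = 0.6


def combined_score(lead):
    """Blend fit and behavior, but only for leads that pass the fit gate."""
    if lead.fit_score < FIT_THRESHOLD:
        # Poor fit: no amount of activity should trigger outreach.
        return 0.0
    return FIT_WEIGHT * lead.fit_score + BEHAVIOR_WEIGHT * lead.behavior_score


busy_but_wrong_fit = Lead(fit_score=30.0, behavior_score=100.0)
good_fit_and_active = Lead(fit_score=80.0, behavior_score=90.0)

print(combined_score(busy_but_wrong_fit))
print(combined_score(good_fit_and_active))
```

The gate stops a very active but poorly matched lead from outranking a well-matched one, which a flat additive checklist cannot guarantee.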

Building a Scoring Model Without a Data Science Team

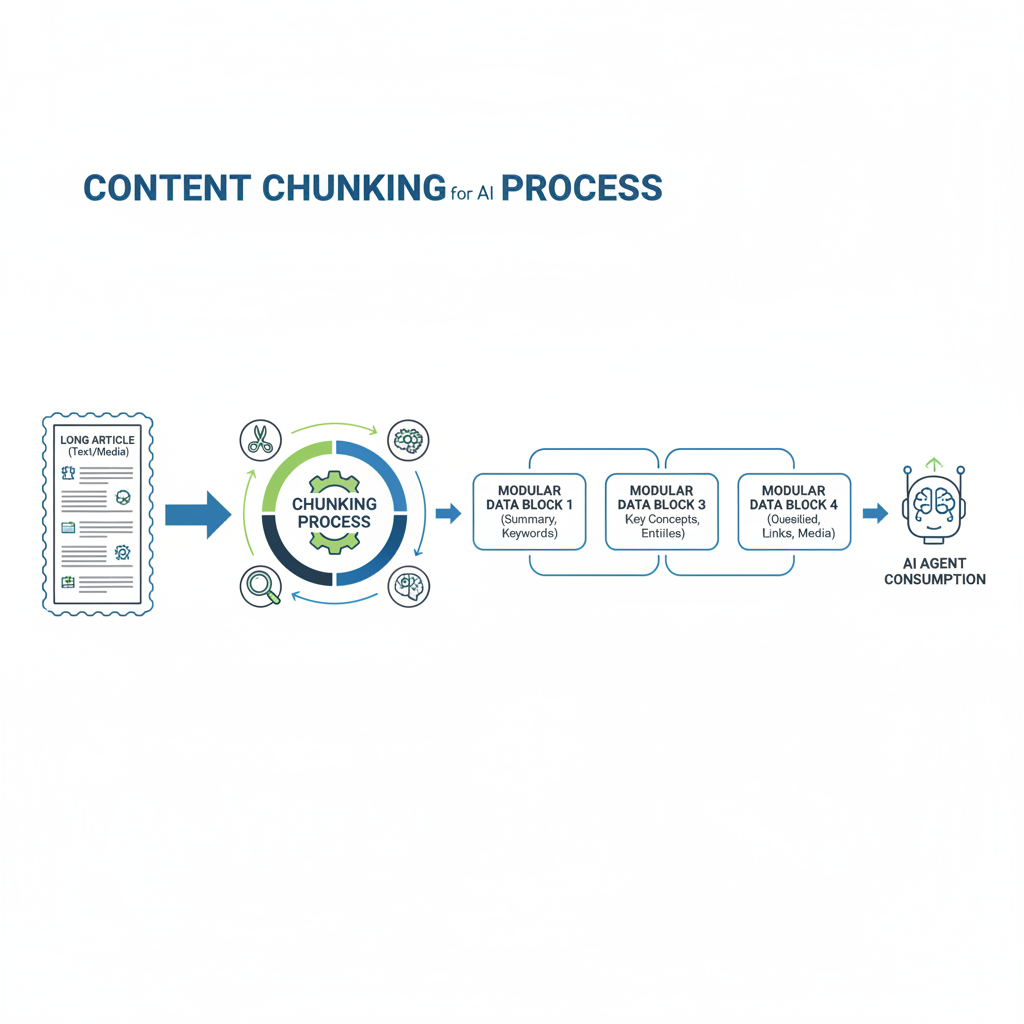

Small teams can build lead scoring models using simple weighted logic (e.g., +10 points for a specific job title) and LLMs to categorize unstructured data like company descriptions. Building a scoring model no longer requires a PhD in data science because low-code tools provide the necessary infrastructure. By translating business intuition into mathematical weights, any founder or sales lead can create a system that prioritizes high-value prospects automatically.

The process begins with a simple weighted point system where specific actions or attributes receive a numerical value based on their historical correlation with closed deals. LLMs enhance this. They read through unstructured data—such as a LinkedIn bio—to assign categories that would otherwise require manual review. This combination of rigid logic and flexible AI creates a sophisticated filter for any small team.
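The LLM step can be sketched without committing to a specific provider. In the example below, `ask_llm` is a hypothetical callable standing in for whatever model API or n8n AI node you use, and the category list is an assumption. The point is the pattern: constrain the prompt to fixed categories and validate the reply before trusting it.

```python
# Hypothetical categories; replace with the segments that matter to you.
CATEGORIES = ("saas", "agency", "ecommerce", "other")


def build_prompt(description):
    """Ask for exactly one category so the reply is easy to validate."""
    options = ", ".join(CATEGORIES)
    return (
        f"Classify the company below into exactly one of: {options}.\n"
        "Reply with the category name only.\n\n"
        f"Company: {description}"
    )


def categorize(description, ask_llm):
    """Return a known category, falling back to 'other' on odd replies.

    `ask_llm` is an assumed function: it takes a prompt string and
    returns the model's text reply.
    """
    reply = ask_llm(build_prompt(description)).strip().lower()
    return reply if reply in CATEGORIES else "other"


def fake_llm(prompt):
    # Stand-in for a real model call, used only to demonstrate the flow.
    return " SaaS\n"


print(categorize("We sell subscription analytics software.", fake_llm))
```

Validating the reply against a closed list keeps a chatty or malformed model response from leaking into your CRM as a new, unplanned category.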

I recall a founder who mapped out his entire lead qualification process on a basic spreadsheet before moving it into an automation platform. He assigned points to job titles like ‘VP of Operations’ and subtracted points for students or competitors. Once he mirrored this logic in an n8n workflow, the system handled the heavy lifting (and frankly, it was more consistent than his morning coffee habit). This transition from a static sheet to a live, automated scoring engine is where the real efficiency gains happen for SMEs.
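A spreadsheet like the one described translates almost directly into lookup tables. The sketch below is illustrative only. The specific titles, segments, and point values are assumptions, apart from the +10 job-title example mentioned earlier.

```python
# Assumed point tables mirroring a simple spreadsheet of scoring rules.
TITLE_POINTS = {
    "vp of operations": 10,
    "head of sales": 8,
}
SEGMENT_PENALTIES = {
    "student": -15,
    "competitor": -25,
}


def score_lead(job_title, segment=None):
    """Add points for valuable titles and subtract for unwanted segments."""
    score = TITLE_POINTS.get(job_title.strip().lower(), 0)
    if segment:
        score += SEGMENT_PENALTIES.get(segment.strip().lower(), 0)
    return score


print(score_lead("VP of Operations"))
print(score_lead("Intern", segment="student"))
```

Keeping the rules in plain tables means a non-technical teammate can review or change a weight without touching the scoring logic itself.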

Iterative refinement is far more critical than achieving initial perfection. Your first model will likely be a bit clunky, but that is perfectly acceptable as long as you start. By comparing actual conversions against initial scores, you can refine the underlying weights over time. If high-scoring leads fail to close, simply lower the point value for those specific attributes while raising others. This constant feedback loop ensures the model evolves alongside your market understanding (without needing to write a single line of Python).
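The same feedback loop can be run by hand in a spreadsheet, but for readers who want to see it spelled out, here is a rough sketch. The attribute names, sample history, and fixed adjustment step are all assumptions.

```python
def conversion_rate_by_attribute(leads):
    """Map each attribute to the share of leads carrying it that converted."""
    totals, wins = {}, {}
    for lead in leads:
        for attr in lead["attributes"]:
            totals[attr] = totals.get(attr, 0) + 1
            if lead["converted"]:
                wins[attr] = wins.get(attr, 0) + 1
    return {attr: wins.get(attr, 0) / totals[attr] for attr in totals}


def adjust_weights(weights, rates, baseline, step=2):
    """Nudge weights up for attributes that beat the baseline, down otherwise."""
    adjusted = dict(weights)
    for attr, rate in rates.items():
        if attr not in adjusted:
            continue
        if rate > baseline:
            adjusted[attr] += step
        elif rate < baseline:
            adjusted[attr] -= step
    return adjusted


# Hypothetical history of scored leads and their outcomes.
history = [
    {"attributes": {"vp_title", "pricing_visit"}, "converted": True},
    {"attributes": {"vp_title"}, "converted": False},
    {"attributes": {"pricing_visit"}, "converted": True},
    {"attributes": {"webinar"}, "converted": False},
]
weights = {"vp_title": 10, "pricing_visit": 15, "webinar": 5}

rates = conversion_rate_by_attribute(history)
baseline = sum(lead["converted"] for lead in history) / len(history)
print(adjust_weights(weights, rates, baseline))
```

Small fixed steps are a deliberately cautious choice here: with only a handful of deals, large swings would mostly chase noise.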

Adopting these low-code scoring systems is a strategic imperative for any business that cannot afford to waste time on low-intent inquiries. While larger competitors might spend months developing custom algorithms, a small team can deploy a functional LLM-based categorizer in a single afternoon. Speed and adaptability are the primary advantages here. By focusing on practical application rather than theoretical accuracy, organizations move from manual chaos to a structured, data-driven sales process that scales without increasing headcount.

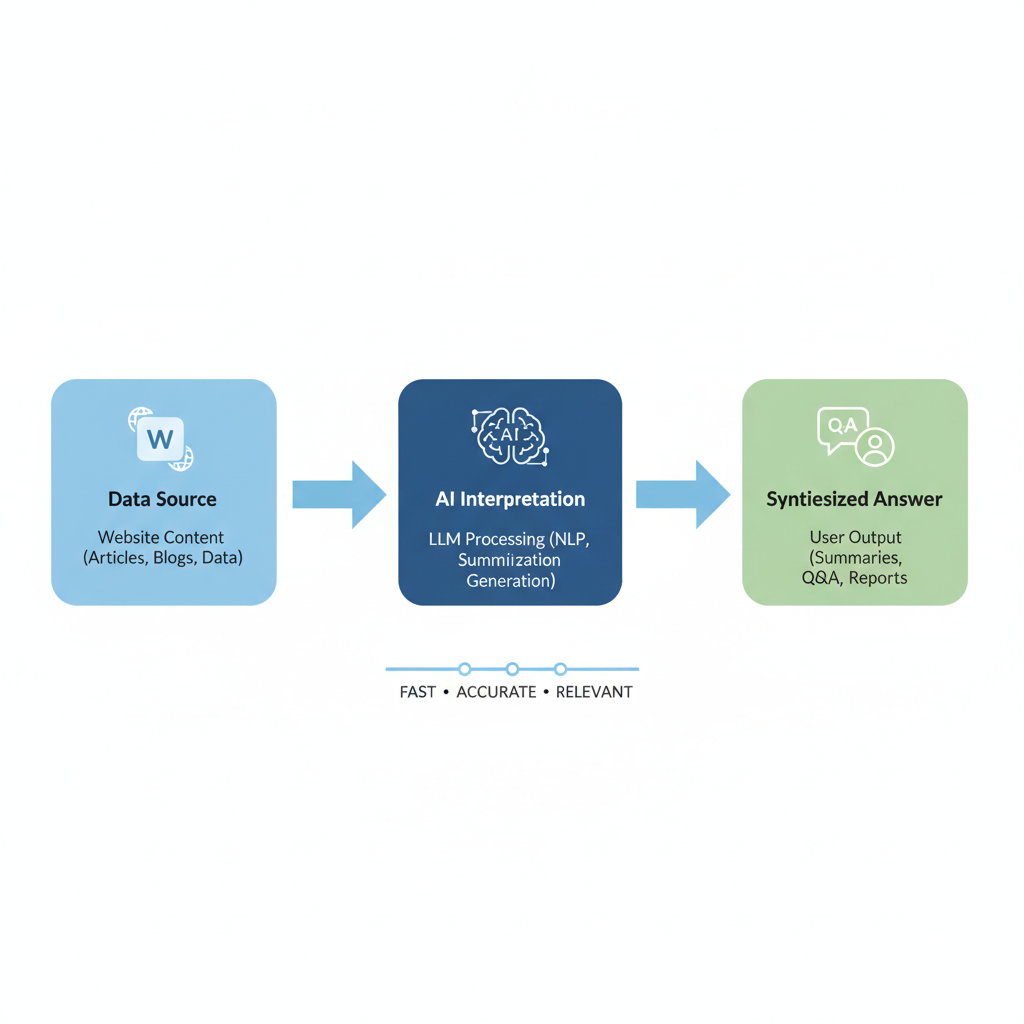

Automating the Pipeline: Using n8n to Centralize Lead Intelligence

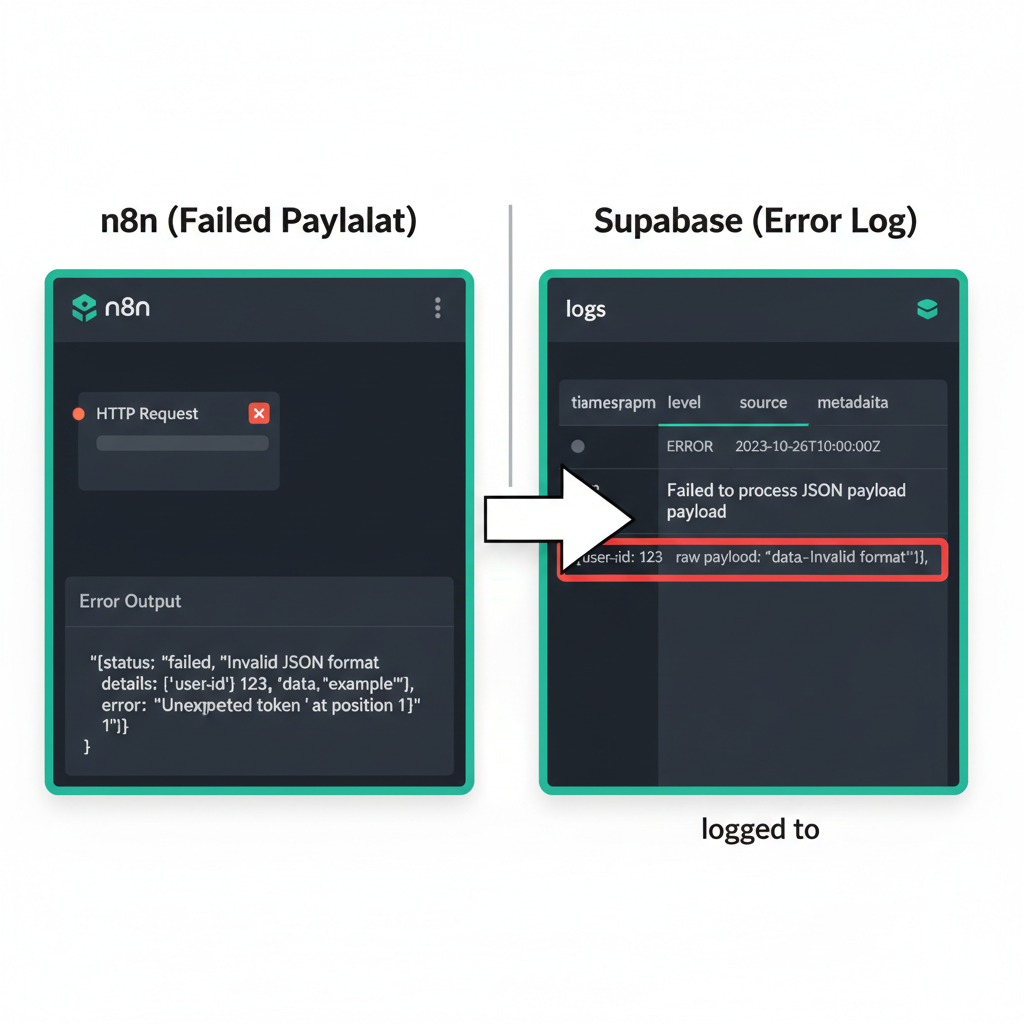

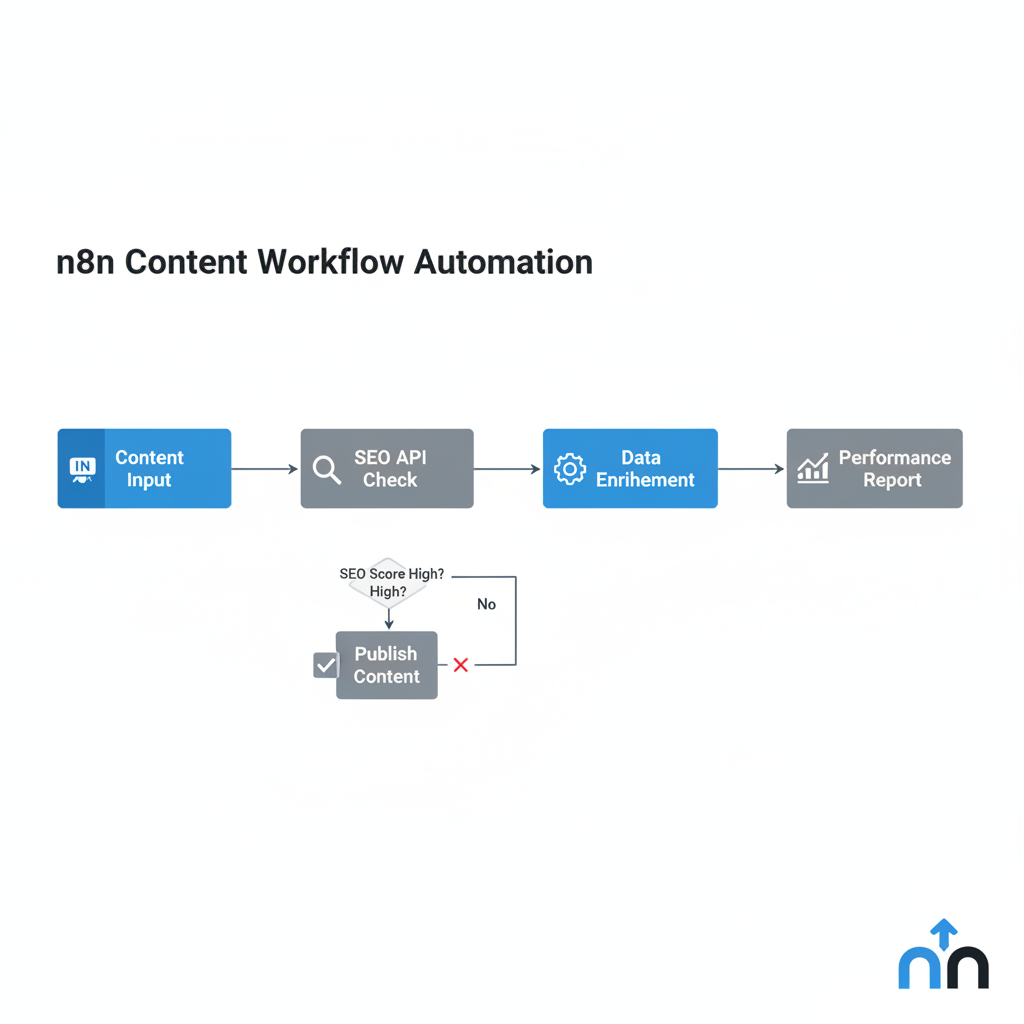

n8n acts as the central hub for lead scoring by pulling data from CRMs, webhooks, and enrichment tools, calculating the score, and pushing notifications to Slack or email. This orchestration layer eliminates the manual overhead of moving data between disparate platforms. By integrating your existing tech stack into a single workflow, you ensure that every lead is processed against the same logic instantly. Imagine a lead arrives via a web form; n8n immediately captures that webhook and initiates a sequence of API calls. It queries external databases to verify company size and revenue before the lead reaches your sales team. This approach transforms fragmented data points into a unified intelligence stream. You no longer need to check multiple tabs to understand a prospect’s value.

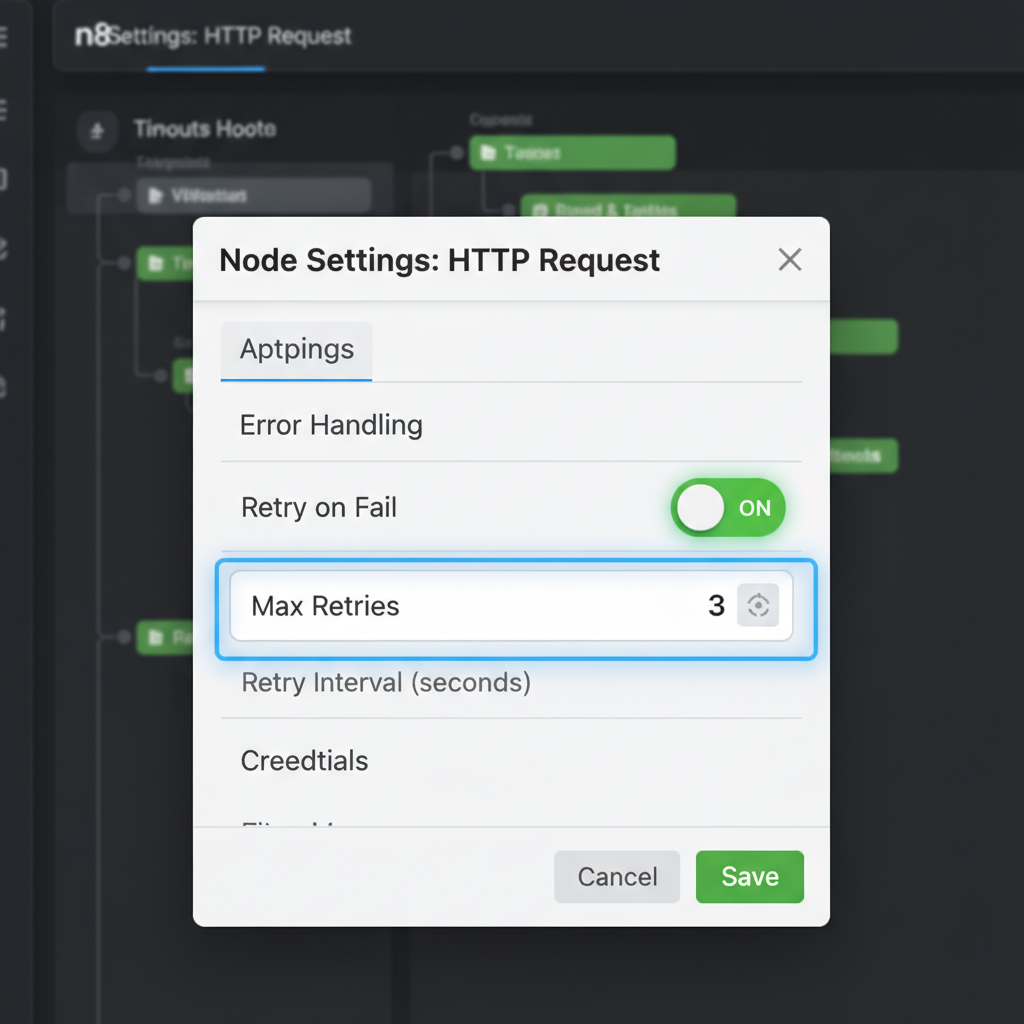

The architecture of an effective automation pipeline relies on precise triggers. An n8n workflow that triggers when a Typeform is submitted, enriches the data via an API, calculates a score, and creates a high-priority task in HubSpot represents the standard for small teams.

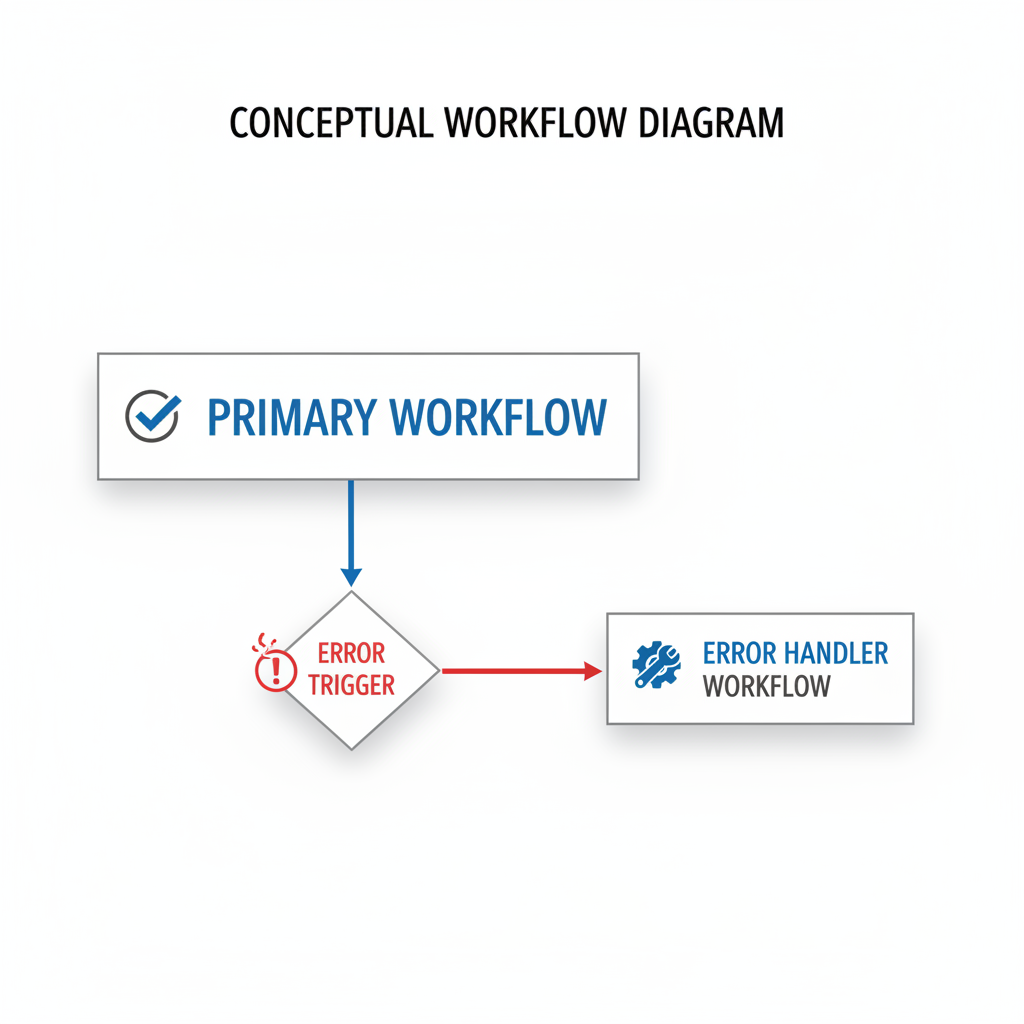

Conditional routing is where the system truly demonstrates its strategic value. Based on the calculated score, n8n uses “If” nodes to determine the next immediate action. High-value “hot” leads—those matching your ideal customer profile with high intent—trigger urgent notifications in Slack. This ensures your best sales reps engage within minutes. Lower-scoring “warm” leads follow a different path. Perhaps they are added to a specific email nurturing sequence in Mailchimp or assigned a lower priority in the CRM. You control the logic. No lead gets lost in the shuffle. By automating these decisions, you maintain a high velocity for top-tier opportunities while keeping the rest of the pipeline moving through automated touchpoints.
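The branching performed by the “If” nodes amounts to a pair of threshold checks. The sketch below shows that decision in isolation. The thresholds and route names are assumptions, not n8n settings.

```python
# Assumed cut-offs; calibrate them against your own score distribution.
HOT_THRESHOLD = 80
WARM_THRESHOLD = 40


def route_lead(score):
    """Return the name of the next action for a scored lead."""
    if score >= HOT_THRESHOLD:
        return "slack_alert"        # urgent notification to sales
    if score >= WARM_THRESHOLD:
        return "nurture_sequence"   # e.g. an email nurturing campaign
    return "low_priority"           # stays in the CRM at low priority


for score in (92, 55, 10):
    print(score, route_lead(score))
```

Because every lead falls into exactly one branch, nothing is left unrouted, which is the property the workflow relies on.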

Consider the technical flow beyond the initial trigger. Once the form submission hits the webhook node, the workflow branches. One path might ping a service like Clearbit or Apollo to fetch missing firmographic details. Another path could check your internal CRM history to see if the contact has interacted with your brand before. These variables then feed into a “Code” node (called the “Function” node in older n8n versions) where the scoring math happens. It is a clean, repeatable process that reduces human bias.

Centralizing lead intelligence through n8n provides a level of operational efficiency usually reserved for enterprise-level corporations. You gain visibility into your pipeline health, and you save time: instead of manually researching every LinkedIn profile that downloads a whitepaper, your system does it in seconds. This isn’t just about speed; it is about consistency. Every lead is held to the same rigorous standard, so high-potential accounts are far less likely to slip through the cracks.
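The flow described above (capture, enrich, check history, score) can be sketched as one function with the external services passed in. The stub functions below are placeholders, not real Clearbit, Apollo, or CRM clients, and the field names and point values are assumptions.

```python
def process_submission(form, enrich, lookup_history, score):
    """Merge form data with enrichment and CRM history, then score it."""
    firmographics = enrich(form["email"])
    prior_interactions = lookup_history(form["email"])
    lead = {**form, **firmographics, "prior_interactions": prior_interactions}
    lead["score"] = score(lead)
    return lead


# --- Stand-ins for real services, used only for illustration ---
def fake_enrich(email):
    return {"company_size": 120, "industry": "software"}


def fake_history(email):
    return 3  # number of earlier touchpoints found in the CRM


def simple_score(lead):
    points = 0
    if 50 <= lead["company_size"] <= 500:
        points += 30  # assumed ideal company-size band
    points += min(lead["prior_interactions"], 5) * 5  # capped engagement bonus
    return points


result = process_submission(
    {"email": "ana@example.com", "name": "Ana"},
    enrich=fake_enrich,
    lookup_history=fake_history,
    score=simple_score,
)
print(result["score"])
```

Passing the services in as arguments mirrors how separate nodes feed a single scoring step, and it lets you test the scoring math without calling any paid API.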

Implementation Roadmap: From Zero to Automated in 7 Days

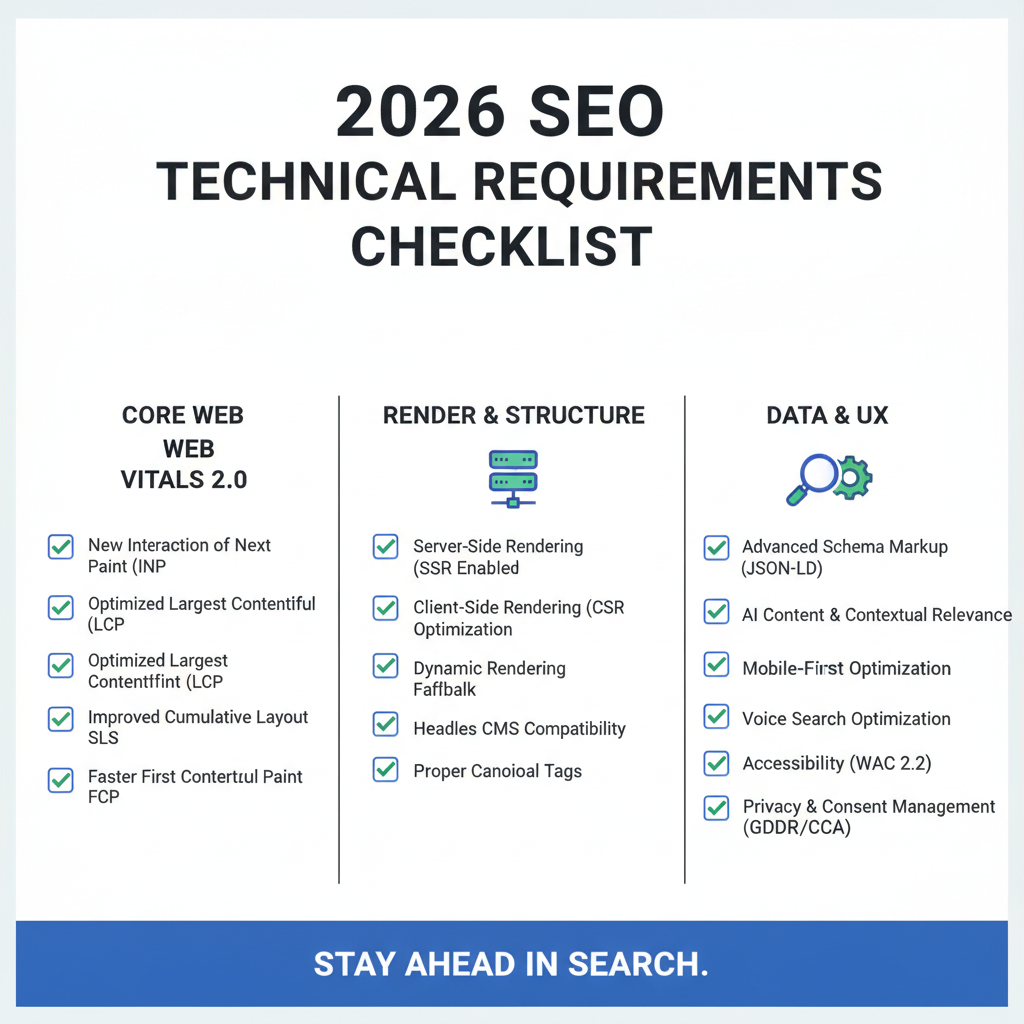

A basic AI lead scoring system can be implemented in one week: 2 days for data auditing, 2 days for n8n workflow construction, 2 days for testing and calibration, and 1 day for team onboarding and rollout. This rapid deployment allows small teams to move from subjective guesswork to data-driven prioritization without months of development. By focusing on a “Minimum Viable Scoring” model, an SME leader can identify the highest-value signals, such as job title or pricing page visits, and map them to specific point values.

Imagine a sales manager setting up this logic on a Tuesday afternoon and receiving their first high-priority Slack alert by Thursday morning. This speed is possible because modern low-code tools bypass the need for custom coding. Instead of waiting for a perfect data set, the focus shifts to immediate utility and iterative improvement. Why let a messy CRM prevent you from identifying your best prospects right now? This 7-day roadmap ensures that the transition from manual chaos to a structured, automated engine happens with minimal friction.

The first 48 hours are dedicated to the data audit. You must identify where your lead data lives and which fields are actually reliable. Is the “Industry” field consistently filled, or should you rely on email domains? Once the data sources are mapped, the next two days involve building the n8n workflow. This is where the logic lives—connecting your CRM to an LLM that evaluates the lead’s intent and fit. You are not just moving data; you are creating a digital brain that works while your team sleeps.

Days five and six focus on stress testing and internal alignment. Before the system goes live, run historical leads through the workflow to see if the AI scores them as your top reps would. This calibration phase prevents the “garbage in, garbage out” trap that often plagues poorly planned automations. It is a moment for self-correction: if the scores feel off, you adjust the weighting of the criteria immediately to ensure accuracy.
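A simple way to quantify this calibration is to compare the model's labels with the labels your top reps would give the same historical leads. The sketch below assumes both sets of labels are already collected in matching order. The sample labels are invented.

```python
def agreement_rate(model_labels, rep_labels):
    """Share of historical leads where the model and the reps agree."""
    if len(model_labels) != len(rep_labels):
        raise ValueError("Both label lists must cover the same leads.")
    if not model_labels:
        return 0.0
    matches = sum(m == r for m, r in zip(model_labels, rep_labels))
    return matches / len(model_labels)


def disagreements(model_labels, rep_labels):
    """Indexes of leads worth reviewing before adjusting any weights."""
    return [i for i, (m, r) in enumerate(zip(model_labels, rep_labels)) if m != r]


# Hypothetical labels for four historical leads.
model = ["hot", "warm", "cold", "hot"]
reps = ["hot", "hot", "cold", "hot"]

print(agreement_rate(model, reps))
print(disagreements(model, reps))
```

The list of disagreements is often more useful than the headline rate, because each mismatch points to a specific criterion whose weight may need adjusting.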

On the final day, the team is onboarded. This isn’t a complex technical training but a strategic briefing on how to handle the new alerts. When a “Hot Lead” notification hits a rep’s screen, they should know exactly which talking points the AI suggested. By the end of the week, the manual burden vanishes, replaced by a system that scales with your growth and keeps the sales pipeline moving efficiently.

Frequently Asked Questions About AI Lead Scoring

What are the most common concerns regarding AI lead scoring?

Common questions about AI lead scoring include: Do I need a large dataset? (No, start with rules-based scoring), Is it expensive? (n8n makes it highly cost-effective), and Does it replace sales reps? (No, it empowers them). Implementation triggers hesitation among founders worried about technical complexity. However, the barrier to entry is lower than most assume, especially when using low-code tools that bypass expensive data science teams. Small teams can begin with basic heuristics and introduce machine learning as lead volume grows.

Starting small allows you to validate logic before committing to advanced models. By utilizing n8n, businesses connect existing CRMs to an AI engine without massive overhead. This modular approach ensures automation grows with revenue, providing a scalable framework. Rather than a rigid black box, modern scoring functions as a flexible extension of your sales strategy, ensuring no high-value prospect slips through the cracks due to fatigue.

What if the AI misses a high-value lead?

No one wants to lose a “diamond in the rough” because an algorithm flagged a non-standard email domain. To prevent this, we implement a safety net using manual overrides. If a lead meets high-intent criteria but fails the AI scoring, the system flags it for review. Technology serves as a filter, not a barrier, allowing reps to maintain the final word on quality.
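The safety net can be as small as one extra check that runs alongside the score. In this sketch, the set of high-intent actions and the threshold are assumptions; the rule itself follows the description above.

```python
# Assumed list of actions that signal buying intent on their own.
HIGH_INTENT_ACTIONS = {"demo_request", "pricing_page_visit", "roi_calculator"}
HOT_THRESHOLD = 80  # assumed cut-off for an automatic "hot" alert


def needs_manual_review(ai_score, actions):
    """Flag leads that scored low but still showed high-intent behavior."""
    showed_intent = bool(HIGH_INTENT_ACTIONS & set(actions))
    return ai_score < HOT_THRESHOLD and showed_intent


print(needs_manual_review(35, ["blog_view", "demo_request"]))
print(needs_manual_review(35, ["blog_view"]))
print(needs_manual_review(90, ["demo_request"]))
```

Leads that already score above the threshold are not flagged, since they reach a rep through the normal alert path anyway.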

Is AI lead scoring too expensive for a small business?

Enterprise solutions carry five-figure price tags, but low-code has changed the math. Using n8n for orchestration allows you to pay only for the compute and API calls you use. You aren’t locked into bloated subscriptions. This cost-effective structure (a relief for bootstrapped founders) makes sophisticated automation accessible to any team, allowing you to compete with larger organizations without their budgets.

How much data is required to begin?

You do not need millions of rows of historical data. Many implementations begin with a “rules-plus-AI” hybrid. You define basic parameters like industry or job title, while the AI analyzes the nuance of form responses. As your CRM fills, the system learns from closed-won deals. It is better to start with a functional system today than to wait for a “perfect” dataset.

Action Steps: Moving from Manual Chaos to a Working System

To start with AI lead scoring, first audit your last 20 closed-won deals to identify common traits, then sign up for an n8n trial to begin mapping your first automated workflow. This initial audit serves as the foundation for your scoring logic, ensuring your automation reflects actual market success rather than guesswork. Identifying the specific industries, job titles, or pain points shared by your most profitable clients allows the system to prioritize similar leads with surgical precision.
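If your closed-won deals can be exported from the CRM, the audit reduces to counting which values recur. The sketch below assumes each deal is a simple record with `industry` and `job_title` fields. The field names, the sample data, and the 50% cut-off are all illustrative.

```python
from collections import Counter


def common_traits(deals, fields, min_share=0.5):
    """Return, per field, the values shared by at least `min_share` of deals."""
    if not deals:
        return {}
    traits = {}
    for field in fields:
        counts = Counter(deal[field] for deal in deals if deal.get(field))
        frequent = [value for value, n in counts.items() if n / len(deals) >= min_share]
        if frequent:
            traits[field] = frequent
    return traits


# Hypothetical export of closed-won deals.
deals = [
    {"industry": "software", "job_title": "VP of Operations"},
    {"industry": "software", "job_title": "COO"},
    {"industry": "logistics", "job_title": "VP of Operations"},
    {"industry": "software", "job_title": "VP of Operations"},
]

print(common_traits(deals, ["industry", "job_title"]))
```

Traits that clear the cut-off are natural candidates for your first two or three scoring rules. Anything rarer can wait until you have more deals to learn from.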

Once these patterns emerge, the transition from manual chaos to a structured system becomes a matter of technical execution. Imagine a founder opening their CRM and tagging their top 10 customers to find the “common thread” for their first scoring rule. This small, deliberate action transforms a cluttered database into a strategic asset. By mapping these traits into a low-code environment, small teams can effectively replicate the sophisticated lead management capabilities once reserved for enterprise-level organizations.

The path toward operational efficiency begins with three concrete steps. First, define your Ideal Customer Profile (ICP) by documenting the firmographics and behaviors that characterize your best buyers. Second, select a low-code platform like n8n to connect your lead sources with your CRM. Third, build a simple scoring pilot that assigns points based on just two or three high-value attributes.

Transitioning to an automated scoring model is not merely a technical upgrade; it is a strategic pivot. Small sales teams no longer need to drown in unqualified inquiries. By implementing these steps, you replace intuition with data-driven clarity. Start today. Build the system that allows your team to focus on closing deals instead of sorting through the noise.